{{incident.created_at | date: \"%Y-%m-%d\"}} - {{incident.title}}\n\nLeadup\n\nDescribe the circumstances that led to this incident\n\nFault\n\nDescribe what failed to work as expected\n\nDetection\n\nDescribe how the incident was detected\n\nRoot causes\n\nRun a 5-whys analysis to understand the true causes of the incident\n\nMitigation and resolution\n\nWhat steps did you take to resolve this incident?\n\nLessons learnt\n\nWhat went well? What could have gone better? What else did you learn?",
    "published_at": null,
    "started_at": "2022-11-27T19:36:00.000-08:00",
    "mitigated_at": "2022-11-27T19:44:32.156-08:00",
    "resolved_at": "2022-11-27T19:44:32.156-08:00",
    "cancelled_at": null,
    "show_timeline": true,
    "show_timeline_starred_only": false,
    "show_timeline_genius": true,
    "show_timeline_trail": true,
    "show_timeline_tasks": true,
    "show_timeline_action_items": true,
    "show_functionalities_impacted": true,
    "show_services_impacted": true,
    "show_groups_impacted": true,
    "show_action_items": true,
    "created_at": "2022-11-27T19:36:49.779-08:00",
    "updated_at": "2022-11-27T19:44:32.177-08:00"
  }
}
```

## pulse.\*

```json JSON
{
  "event": {
    "id": "9839c4ca-5e7b-416d-ad95-d09ae0c8eead",
    "type": "pulse.created",
    "issued_at": "2022-11-27T19:15:30.995-08:00"
  },
  "data": {
    "id": "aa1cab03-00e7-4578-b1ae-72ad9ae417c6",
    "team_id": 1,
    "pulse_trail_id": "b59bfcb7-89ed-4641-b288-5ce1bb3dd801",
    "summary": "Deployed to Kubernetes",
    "labels": [
      { "key": "label1", "value": "value1" },
      { "key": "label2", "value": "value2" }
    ],
    "data": { "hello": "world" },
    "external_id": null,
    "started_at": "2022-11-27T19:15:30.995-08:00",
    "ended_at": null,
    "deleted_at": null,
    "created_at": "2022-11-27T19:15:30.995-08:00",
    "updated_at": "2022-11-27T19:15:30.995-08:00",
    "webhook_type": null,
    "webhook_id": null,
    "webhook_idempotency_key": null,
    "external_url": null,
    "source": "k8s",
    "refs": [
      { "key": "image", "value": "registry.rootly.com/rootly/my-service:cd6214" }
    ]
  }
}
```

# Example Usage With Incidents

Source: https://docs.rootly.com/configuration/example-usage-with-incidents

Let's walk through appending a playbook to a newly created incident. In Slack, for example, you would do this by clicking the **Update** button.

Suppose this is a security-related incident. Once you add "Security" to the **Types** field, Rootly automatically attaches the playbooks associated with the "Security" type.
The number of action items increases from 3 to 6 because the action items for the Security type have been appended. Clicking the **Action Items** button shows the full list of action items.

You can also modify incidents in the Rootly web UI by navigating to the **Incidents** tab and clicking **Edit** on the relevant incident. Modifying an incident in the UI also automatically attaches any playbooks associated with the changes.

# Overview

Source: https://docs.rootly.com/configuration/forms-and-fields

Rootly's Forms page serves as a central hub for managing incident forms and incident property fields. Not every team has the same incident response process and requirements, so Rootly lets teams create, customize, and organize incident forms and property fields to suit their needs.

You can access the Forms & Fields page by navigating to **Configurations >** [**Forms**](https://rootly.com/account/forms). On this page, you can customize built-in forms or create your own for a fully customized experience.

# **Built-In Forms**

Rootly comes with a series of built-in forms that cannot be deleted. However, they can be edited to collect the incident data properties your process requires. To learn how to customize built-in forms, please see the [Built-In Forms](/configuration/built-in-forms) page.

# **Custom Forms**

If additional forms are required, Rootly allows teams to define their own forms that can be triggered either by a Slack command or by Slack block buttons. To learn how to create and manage custom forms, please see the [Custom Forms](/configuration/custom-forms) page.

# **Built-In Fields**

Rootly comes with a series of built-in incident properties that are typically collected during incident response. To learn what can be customized and how to customize built-in fields, please see the [Built-In Fields](/configuration/built-in-fields) page.
# **Custom Fields**

If the built-in properties are not enough to address your requirements, Rootly lets you create custom incident properties of various data types. To learn how to create custom fields, please see the [Custom Fields](/configuration/custom-fields) page.

# **Support**

If you need help or more information about this page, please contact [support@rootly.com](mailto:support@rootly.com) or start a chat by navigating to **Help > Chat with Us**.

# Functionalities

Source: https://docs.rootly.com/configuration/functionalities

# Overview

*Functionality* allows you to specify the features impacted during an incident. This can help you identify which responders to bring in, which on-call to page, which customers to inform, and so on. Individual functionalities can be mapped to your status pages.

# Field Type

*Functionality* can be configured as either a **select** or a **multi-select** field type. This means you can allow only one functionality value per incident, or allow multiple functionality values to be selected for a single incident.

# Attributes

*Functionalities* can be configured with the following attributes. Each functionality attribute can be referenced via Liquid syntax.

Submitting a request through Slack is the quickest way to bring your requests to our team's attention.

**2. Fill out the modal with details regarding your request/bug**

# Getting Help

Source: https://docs.rootly.com/getting-help

To learn more about the features in Rootly, check out our [product walkthrough](https://docs.rootly.com/introduction-to-rootly) or [book a demo](https://rootly.com/demo) for a more personalized tour.

You can share feedback and submit questions to our support team by emailing [support@rootly.com](mailto:support@rootly.com) or using the slash command **/rootly support** in Slack.

# Overview

Source: https://docs.rootly.com/help-and-documentation

Welcome to Rootly's Help and Support site. This is the place to find the information you need and answers to your questions about using and configuring Rootly.

You can use the navigation bar on the left to jump quickly to the pages you want to view. If you're not sure what you're looking for, try the search bar at the top of the page. If you can't find what you need in the docs, see our [Help and Support](https://rootly.com/help "Help and Support") page for how to get more information when you need it.


If not resolved, `now - {{ incident.started_at }}` |

# Web Creating A Sub Incident

Source: https://docs.rootly.com/incidents/web-creating-a-sub-incident

**How**

1. In any incident, clicking the dropdown arrow on the incident status button shows an option to create a sub incident. This option does not appear for incidents that are already sub incidents.

2. Clicking the option brings up an incident creation form. When you complete the form, a new sub incident is created from the incident in which you selected the **Sub incident** option.

# Web Marking As Duplicate

Source: https://docs.rootly.com/incidents/web-marking-as-duplicate

Create an OAuth application at [https://airtable.com/create/oauth](https://airtable.com/create/oauth "https://airtable.com/create/oauth").

Use the following callback URL: `https://rootly.com/auth/airtable/callback`

## Permissions

Check the following scopes:


## Settings

Copy your `client_id` and `client_secret` into Rootly.


## Fields mapping

You can configure the column mapping with our Liquid [Incident Variables](/liquid/incident-variables), for example:

```json
{
  "Name": "{{incident.title}}",
  "Notes": "{{incident.summary}}",
  "Started At": "{{incident.started_at | date: '%FT%T%:z' }}",
  "Link": "{{incident.url}}"
}
```
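To make the mapping concrete, here is a minimal Python sketch of the record the example above would produce once the Liquid variables are rendered. The rendering itself happens inside Rootly; the `incident` values and the `airtable_record` helper are hypothetical and only illustrate the shape of the result, including the ISO 8601 timestamp that `date: '%FT%T%:z'` yields.

```python
import json
from datetime import datetime, timedelta, timezone


def airtable_record(incident: dict) -> dict:
    """Mirror the example mapping: Airtable column name -> incident value.

    `incident` is a hypothetical dict standing in for Rootly's Liquid variables.
    """
    started_at: datetime = incident["started_at"]
    return {
        "Name": incident["title"],
        "Notes": incident["summary"],
        # '%FT%T%:z' means '%Y-%m-%dT%H:%M:%S' followed by a '+HH:MM' offset,
        # which matches isoformat() truncated to whole seconds.
        "Started At": started_at.isoformat(timespec="seconds"),
        "Link": incident["url"],
    }


# Hypothetical incident values, for illustration only.
pacific = timezone(timedelta(hours=-8))
incident = {
    "title": "Checkout API latency",
    "summary": "p99 latency above SLO",
    "started_at": datetime(2022, 11, 27, 19, 36, 0, tzinfo=pacific),
    "url": "https://example.com/incidents/123",
}
print(json.dumps(airtable_record(incident), indent=2))
```

Each key must match an Airtable column name exactly; the values are whatever the Liquid expressions render to.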

## Uninstall

You can **uninstall** this integration in the integrations panel by clicking **Configure > Delete**

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or use the **lower right chat widget** to get connected with an engineer.

# Prometheus Alertmanager

Source: https://docs.rootly.com/integrations/alertmanager-integration

## Why

Prometheus [Alertmanager](https://github.com/prometheus/alertmanager "Alertmanager") will let you send a webhook to Rootly as an incoming alert. The incoming alert can then be used to either create an incident, notify channels, or page on-call targets.

## Installation

Locate **Alertmanager** on the [Integrations catalogue](https://rootly.com/account/integrations "Integrations catalogue") and select `Setup`. You will be presented with the following pop-up.


### Receiving General Alerts

To send general (non-paging) alerts to Rootly, modify the `alertmanager.yml` configuration file as shown below:

```YAML
route:
  receiver: default
  group_by:
    - job
  routes:
    - receiver: rootly
      match:
        alertname: Rootly
      repeat_interval: 1m

receivers:
  # Your existing default receiver; every route must point at a defined receiver.
  - name: default
  - name: rootly
    webhook_configs:
      - url: 'https://webhooks.rootly.com/webhooks/incoming/alertmanager_webhooks'
        send_resolved: true
        http_config:
          authorization:
            type: Bearer
            credentials: a0b9fcad1aae0689cfa05c17df497b2bc5c56d26d3e253503438864dbd6697ee
```

Copy the *Webhook URL* field and *secret* from the setup pop-up, and set them as the `url` and `credentials` parameters, respectively.
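To check the receiver end to end without waiting for a real alert, you could post a payload shaped like an Alertmanager webhook notification yourself. The Python sketch below only builds the request and does not send it. The top-level field names follow Alertmanager's documented webhook format; the label values, the `build_test_alert_request` helper and the `SECRET` placeholder are hypothetical, so substitute the URL and secret from your own setup pop-up.

```python
import json
import urllib.request

WEBHOOK_URL = "https://webhooks.rootly.com/webhooks/incoming/alertmanager_webhooks"
SECRET = "<your-rootly-secret>"  # hypothetical placeholder: copy yours from the setup pop-up


def build_test_alert_request(url: str, secret: str) -> urllib.request.Request:
    """Build (but do not send) a POST mimicking an Alertmanager webhook notification."""
    payload = {
        "version": "4",
        "groupKey": '{}:{alertname="Rootly"}',
        "truncatedAlerts": 0,
        "status": "firing",
        "receiver": "rootly",
        "groupLabels": {"alertname": "Rootly"},
        "commonLabels": {"alertname": "Rootly", "job": "test"},
        "commonAnnotations": {"summary": "Test alert sent by hand"},
        "externalURL": "http://alertmanager.example:9093",
        "alerts": [
            {
                "status": "firing",
                "labels": {"alertname": "Rootly", "job": "test"},
                "annotations": {"summary": "Test alert sent by hand"},
                "startsAt": "2022-11-27T19:36:00Z",
                "endsAt": "0001-01-01T00:00:00Z",
                "generatorURL": "",
                "fingerprint": "0000000000000000",
            }
        ],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        # Same Bearer scheme as the http_config.authorization block in the config.
        headers={"Authorization": f"Bearer {secret}", "Content-Type": "application/json"},
        method="POST",
    )


req = build_test_alert_request(WEBHOOK_URL, SECRET)
# To actually send it: urllib.request.urlopen(req)
```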


## API Reference

Read the complete [API Reference documentation](https://rootly.com/api "API Reference documentation").

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or use the **lower right chat widget** to get connected with an engineer.

# Installation

Source: https://docs.rootly.com/integrations/asana/installation

## Why

With the **Asana** integration:

* Creating an incident in **Rootly** will create a task in **Asana**, if you choose to.

* Creating an action item in **Rootly** will create a task in **Asana**, if you choose to. The task is attached as a **subtask** if the incident has also been created in **Asana**.

* **Changing** an incident's **title, description,** or **status** in **Rootly** will update the task in **Asana.**

* **Changing** an action item's **title, description,** or **status** in **Rootly** will update the task in **Asana.**

* **Changing** the attributes of an incident's Asana task **will not** update incident attributes in **Rootly**.

* **Changing** the status of an action item's Asana task **will not** update action item status in **Rootly** (coming soon).

## Installation

You can set up this integration as a **logged-in admin user** on the integrations page.


## Uninstall

You can **uninstall** this integration in the integrations panel by clicking **Configure > Delete**

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or use the **lower right chat widget** to get connected with an engineer.

# Workflows

Source: https://docs.rootly.com/integrations/asana/workflows

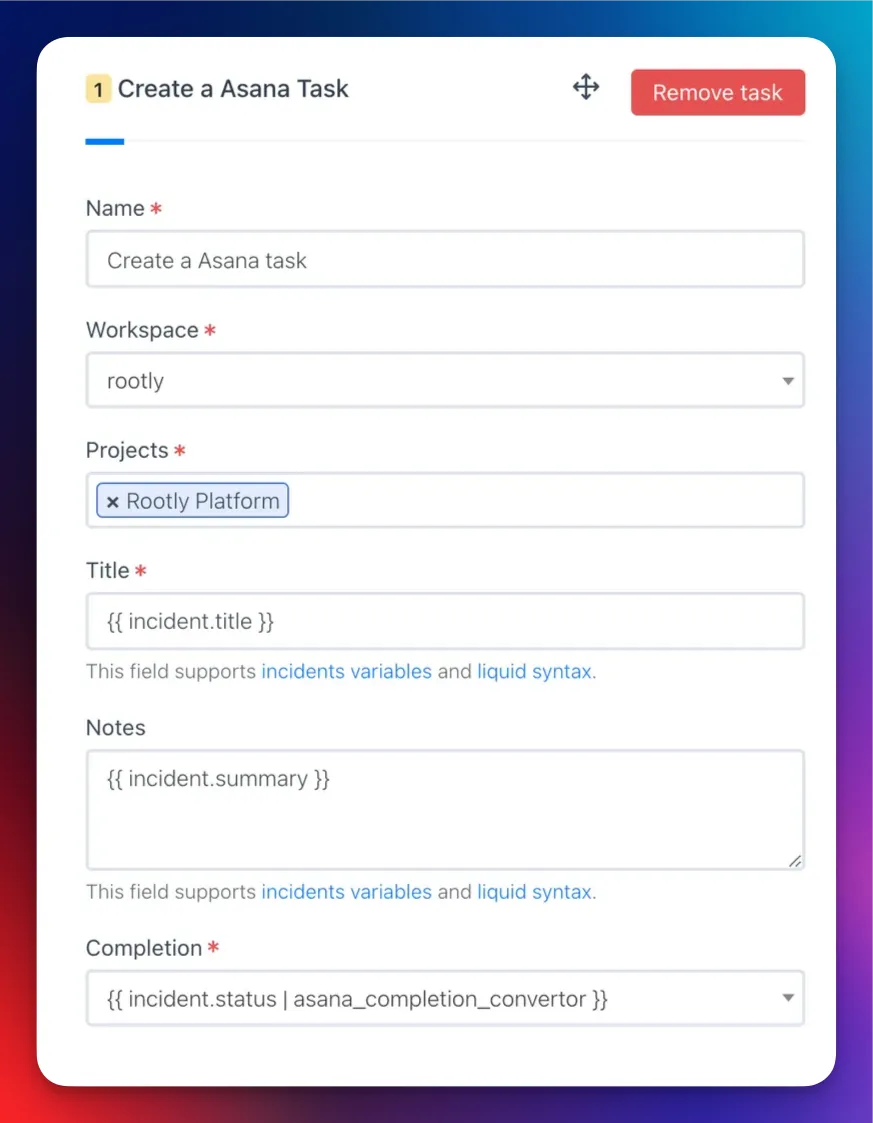

## Create an Asana Task


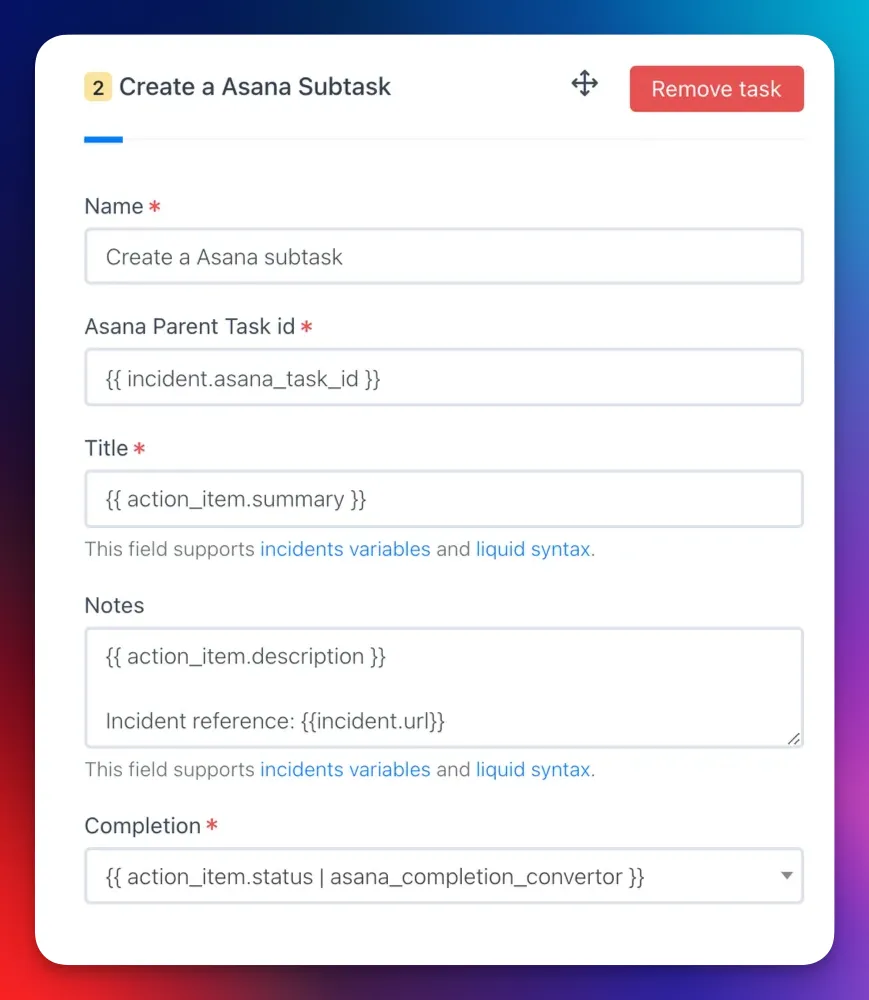

## Create an Asana Subtask

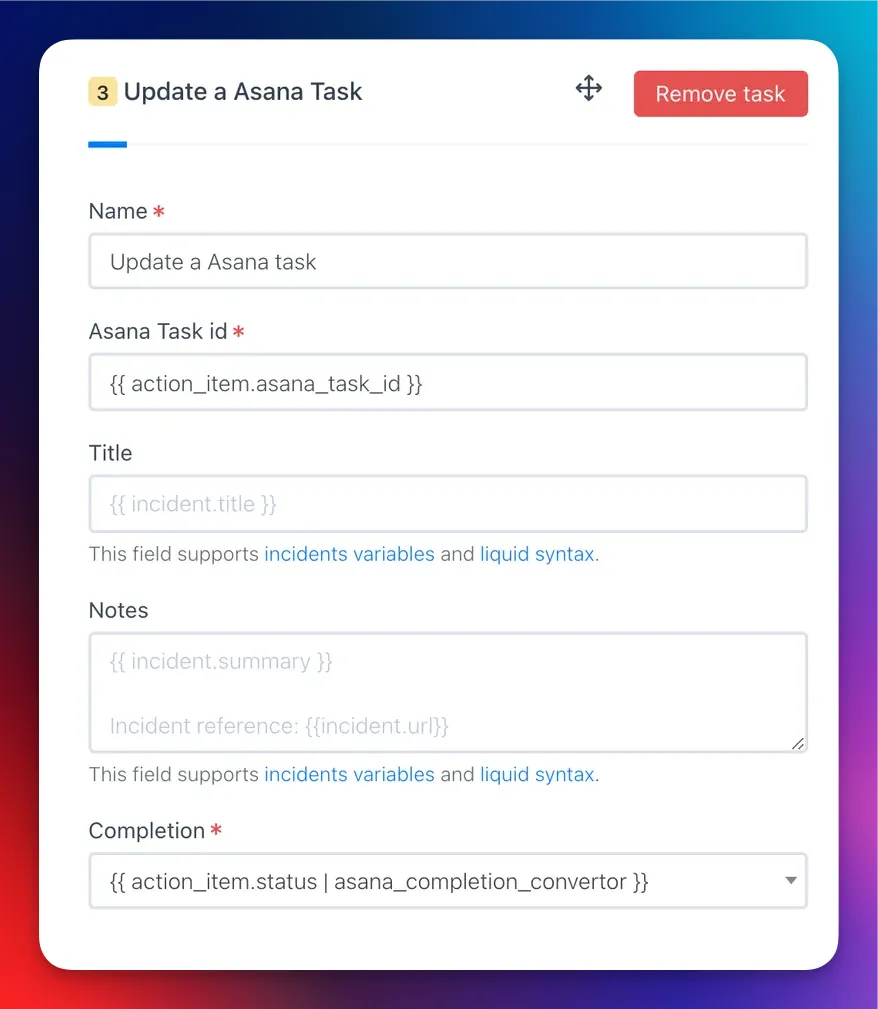

## Update an Asana Task

## Custom fields

```JSON
// Example 1: static values
"custom_fields": {
  "4578152156": "Not Started",              // Text field
  "5678904321": "On Hold",                  // Text field
  "5678904322": "1004598149"                // Single-enum field: one enum option ID
  // For a multi-enum field, pass an array of enum option IDs instead:
  // "5678904322": ["459021796", "1004598149"]
}

// Example 2: values rendered from Liquid incident variables
"custom_fields": {
  "4578152156": "{{ incident.severity }}",  // Text field
  "5678904321": "{{ incident.status }}"     // Text field
}

// Example 3: choose a single-enum option based on severity
"custom_fields": {
  {% if incident.severity == "sev0" %}
  "5678904322": "1004598149",               // Custom field ID => enum option ID
  {% elsif incident.severity == "sev1" %}
  "5678904322": "1004598149",               // Custom field ID => enum option ID
  {% endif %}
  "4578152156": "{{ incident.severity }}"   // Text field
}
```
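Assuming Rootly renders the Liquid first and then parses the result as JSON, every branch of the conditional must still produce valid JSON, which is why the unconditional field is safest placed last. The Python sketch below mirrors the last example (the one with the Liquid `if`/`elsif`) so you can see the rendered `custom_fields` object for a matched and an unmatched severity. The field and enum IDs are the illustrative values from above, and `render_custom_fields` is a hypothetical helper.

```python
import json

# Illustrative IDs copied from the examples above; replace with your own Asana IDs.
TEXT_FIELD_ID = "4578152156"
SEVERITY_ENUM_FIELD_ID = "5678904322"
SEVERITY_TO_ENUM_OPTION = {
    "sev0": "1004598149",
    "sev1": "1004598149",
}


def render_custom_fields(severity: str) -> str:
    """Mimic the Liquid example: always set the text field, and set the
    single-enum field only when the severity has a mapped option."""
    fields = {}
    option_id = SEVERITY_TO_ENUM_OPTION.get(severity)
    if option_id is not None:
        fields[SEVERITY_ENUM_FIELD_ID] = option_id
    fields[TEXT_FIELD_ID] = severity
    return json.dumps({"custom_fields": fields})


print(render_custom_fields("sev0"))
print(render_custom_fields("sev2"))  # no enum option mapped, so only the text field is set
```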

# AWS Elastic Beanstalk

Source: https://docs.rootly.com/integrations/aws-elastic-beanstalk

## Installation

* Create a `.ebextensions/rootly.config` file. (The name does not have to be 'rootly'.)

* Add the following content to the file, adapting it to your use case:

```YAML
files:
  "/opt/elasticbeanstalk/hooks/appdeploy/pre/01rootly.sh":
    mode: "000775"
    owner: root
    group: users
    content: |
      #!/bin/bash
      rootly_api_key="$(/opt/elasticbeanstalk/bin/get-config container -k rootly_api_key)"
      environment="$(/opt/elasticbeanstalk/bin/get-config container -k environment)"
      service="$(/opt/elasticbeanstalk/bin/get-config container -k service)"
      labels="key=value,key2=value2"

      # Install the Rootly CLI
      curl -fsSL https://raw.githubusercontent.com/rootly-io/cli/main/install.sh | sh

      # Log a pulse
      rootly pulse --api-key "${rootly_api_key}" --quiet --environments "${environment}" --services "${service}" --labels "${labels}" "Deploy in progress..."
```
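The `labels` variable in the hook script packs several `key=value` pairs into one comma-separated string. Assuming the CLI splits it on commas and then on the first `=`, each pair would surface as a `{ "key": ..., "value": ... }` entry like the `labels` array in the `pulse.created` webhook payload. The Python sketch below shows that assumed parsing; `parse_labels` is a hypothetical helper, not part of the Rootly CLI.

```python
from typing import Dict, List


def parse_labels(raw: str) -> List[Dict[str, str]]:
    """Split 'key=value,key2=value2' into [{'key': ..., 'value': ...}, ...].

    Hypothetical helper mirroring the assumed meaning of the --labels flag.
    """
    labels = []
    for pair in raw.split(","):
        pair = pair.strip()
        if not pair:
            continue  # tolerate stray or trailing commas
        key, sep, value = pair.partition("=")
        if not sep or not key:
            raise ValueError(f"label {pair!r} is not in key=value form")
        labels.append({"key": key, "value": value})
    return labels


print(parse_labels("key=value,key2=value2"))
```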

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or use the **lower right chat widget** to get connected with an engineer.

# Backstage

Source: https://docs.rootly.com/integrations/backstage/installation

## Rootly plugin for Backstage

See [https://github.com/rootlyhq/backstage-plugin](https://github.com/rootlyhq/backstage-plugin "https://github.com/rootlyhq/backstage-plugin").

## License

This library is under the MIT license.

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or use the **lower right chat widget** to get connected with an engineer.

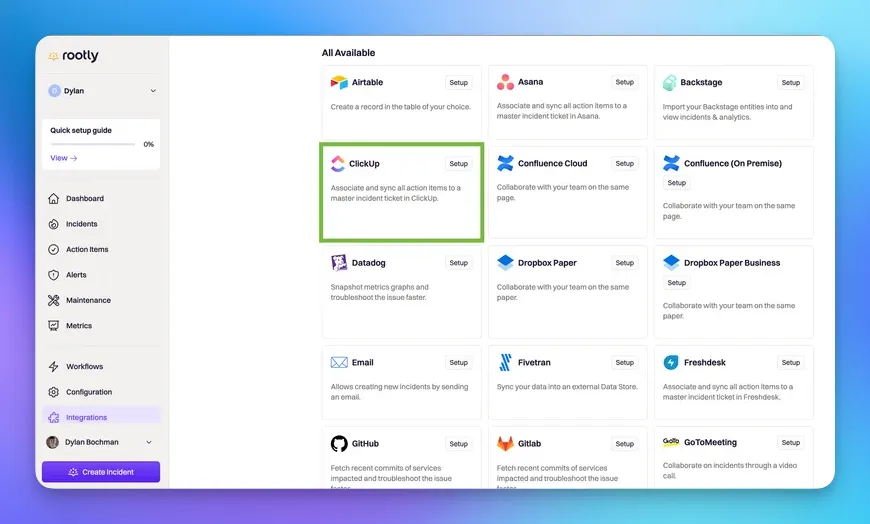

# Installation

Source: https://docs.rootly.com/integrations/clickup/installation

## Why

With the **ClickUp** integration:

* Creating an incident in **Rootly** will create a task in **ClickUp**, if you choose to.

* Creating an action item in **Rootly** will create a task in **ClickUp**, if you choose to. The task is attached as a **subtask** if the incident has also been created in **ClickUp**.

* **Changing** an incident's **title, description,** or **status** in **Rootly** will update the task in **ClickUp.**

* **Changing** an action item's **title, description,** or **status** in **Rootly** will update the task in **ClickUp.**

## Installation

Locate **ClickUp** on the [Integrations catalogue](https://rootly.com/account/integrations "Integrations catalogue") and select Setup.


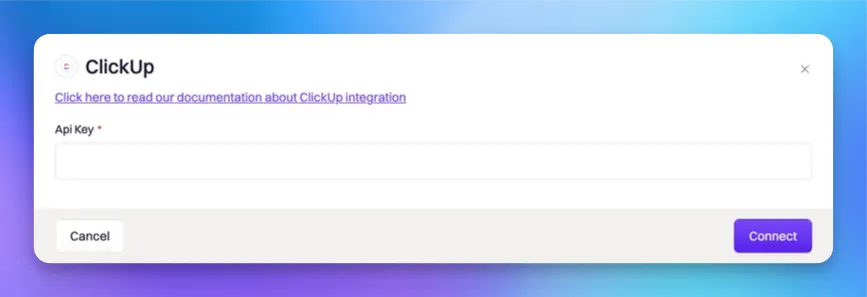

You'll be presented with the following installation page. Please generate a [ClickUp API Token](https://clickup.com/api/developer-portal/authentication/#generate-your-personal-api-token "ClickUp API Token") and paste it into the API Key field before you confirm the installation by selecting `Connect`.


## Uninstall

You can **uninstall** this integration in the integrations panel by clicking **Configure > Delete**

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or use the **lower right chat widget** to get connected with an engineer.

# Workflows

Source: https://docs.rootly.com/integrations/clickup/workflows


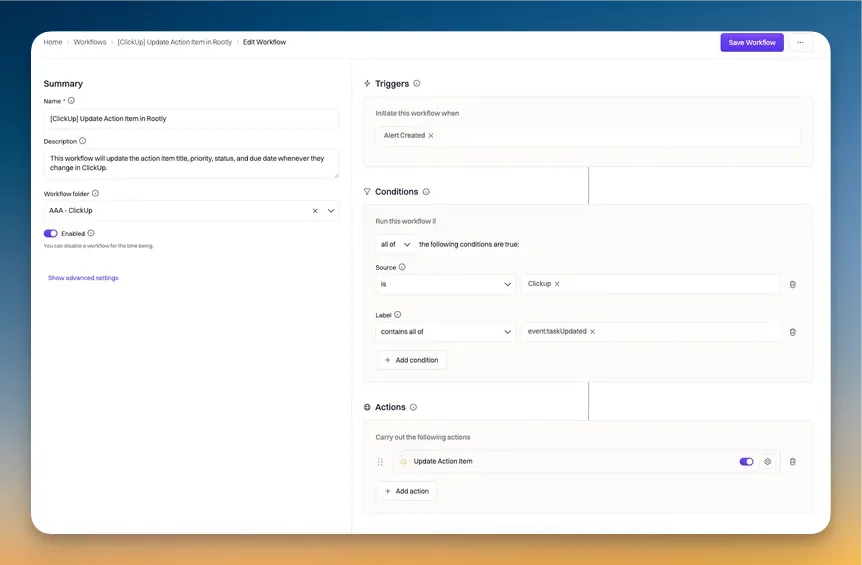


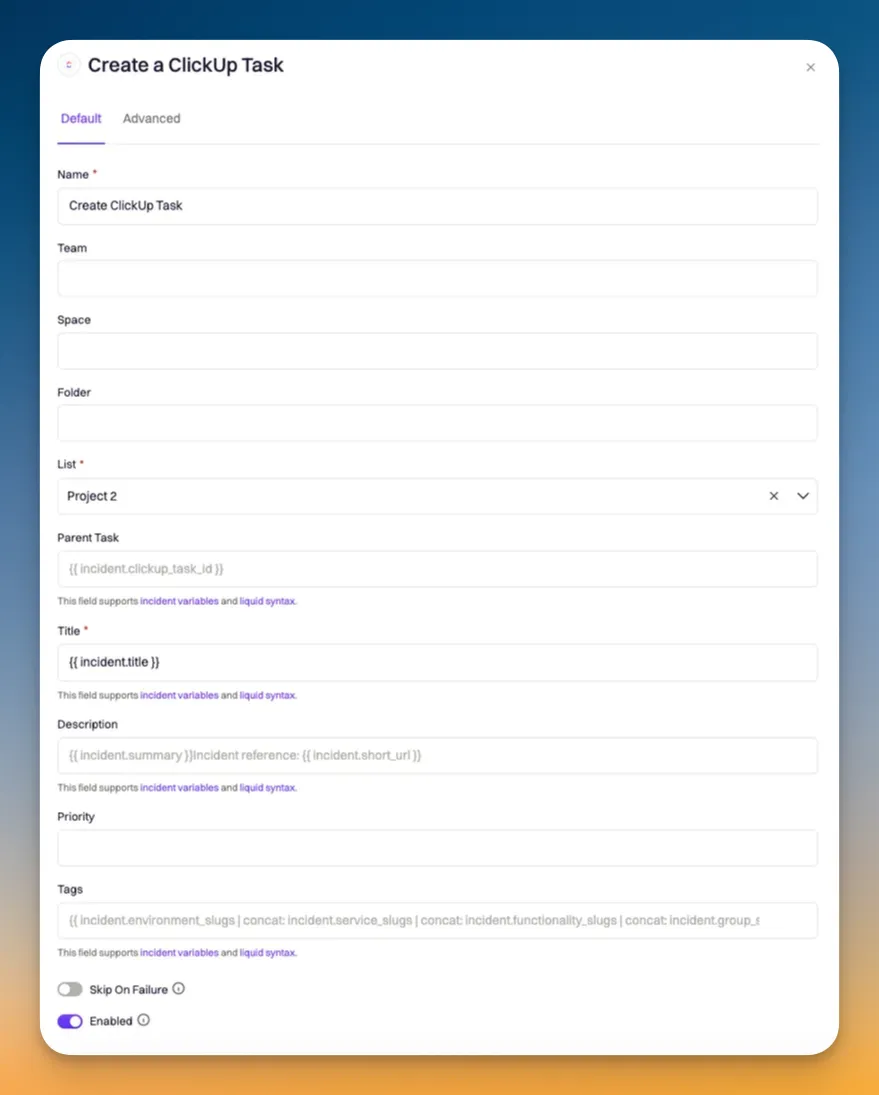

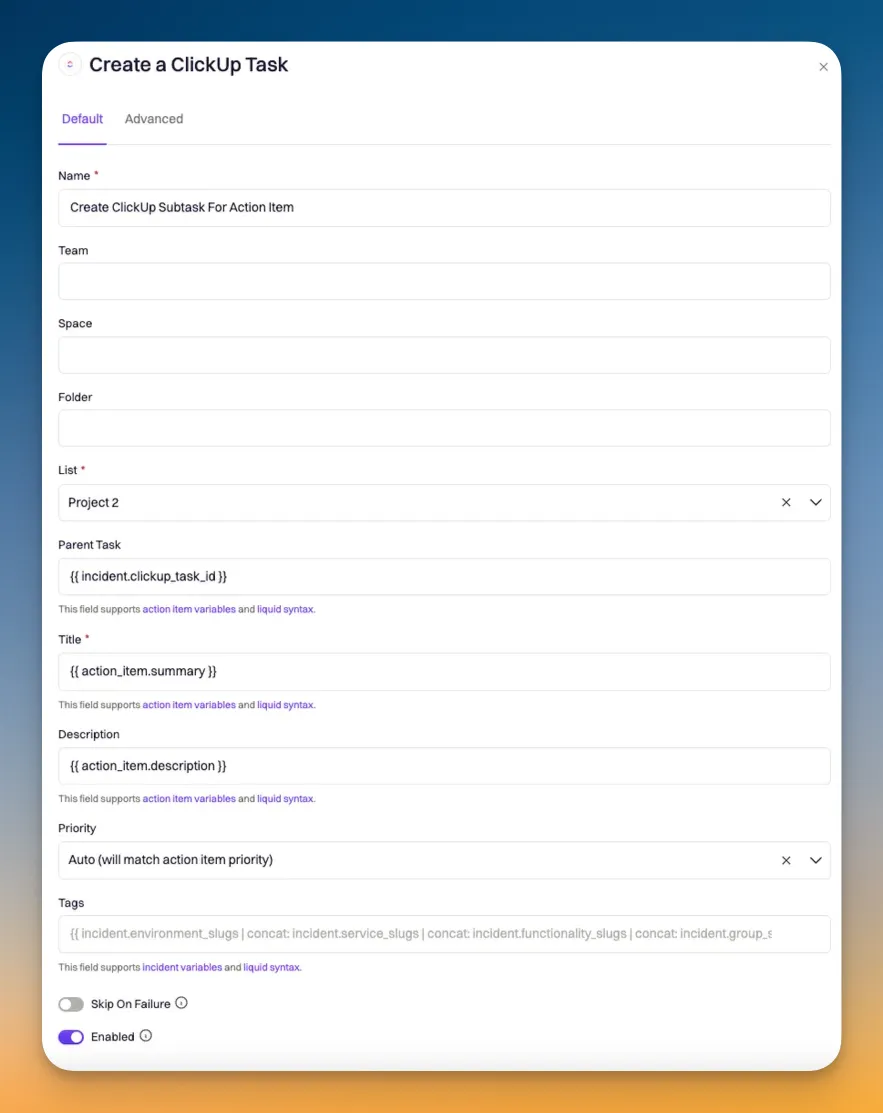

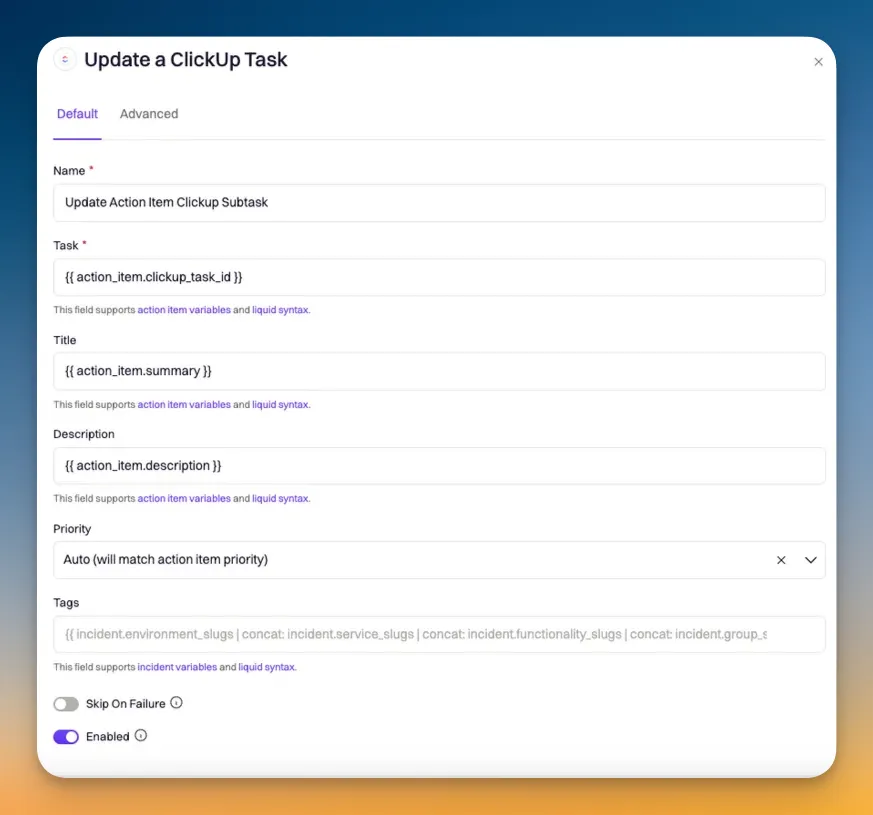

### Create a ClickUp Subtask for Action Item


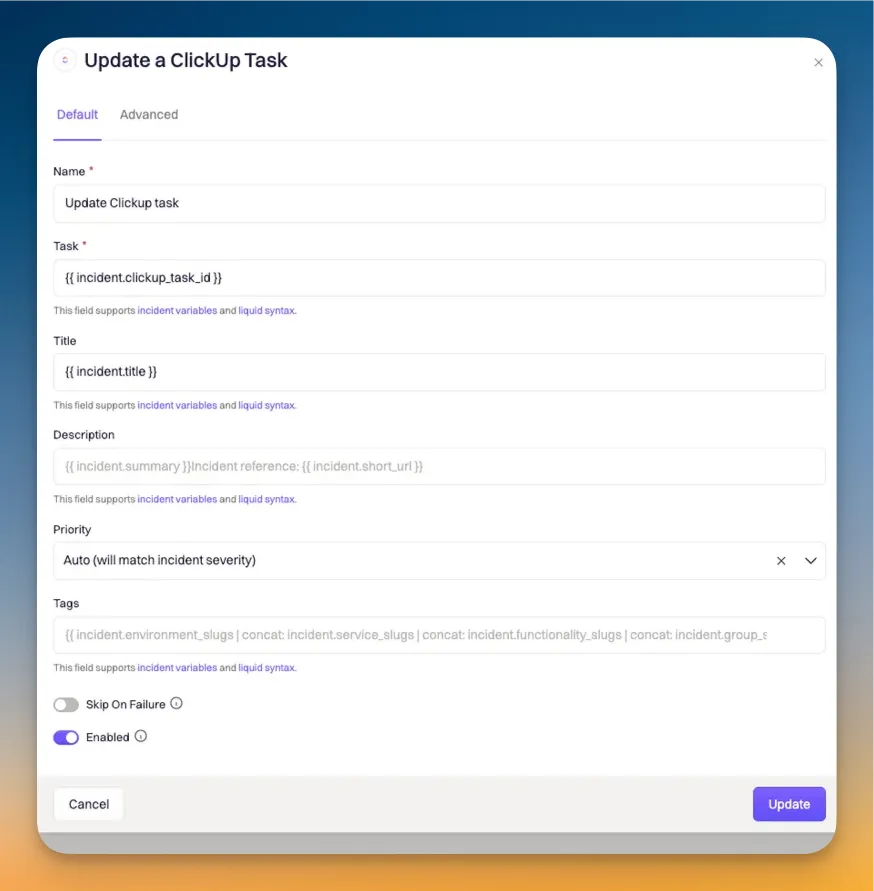

### Update a ClickUp Task

### Update a ClickUp Subtask


## ClickUp --> Rootly Workflows

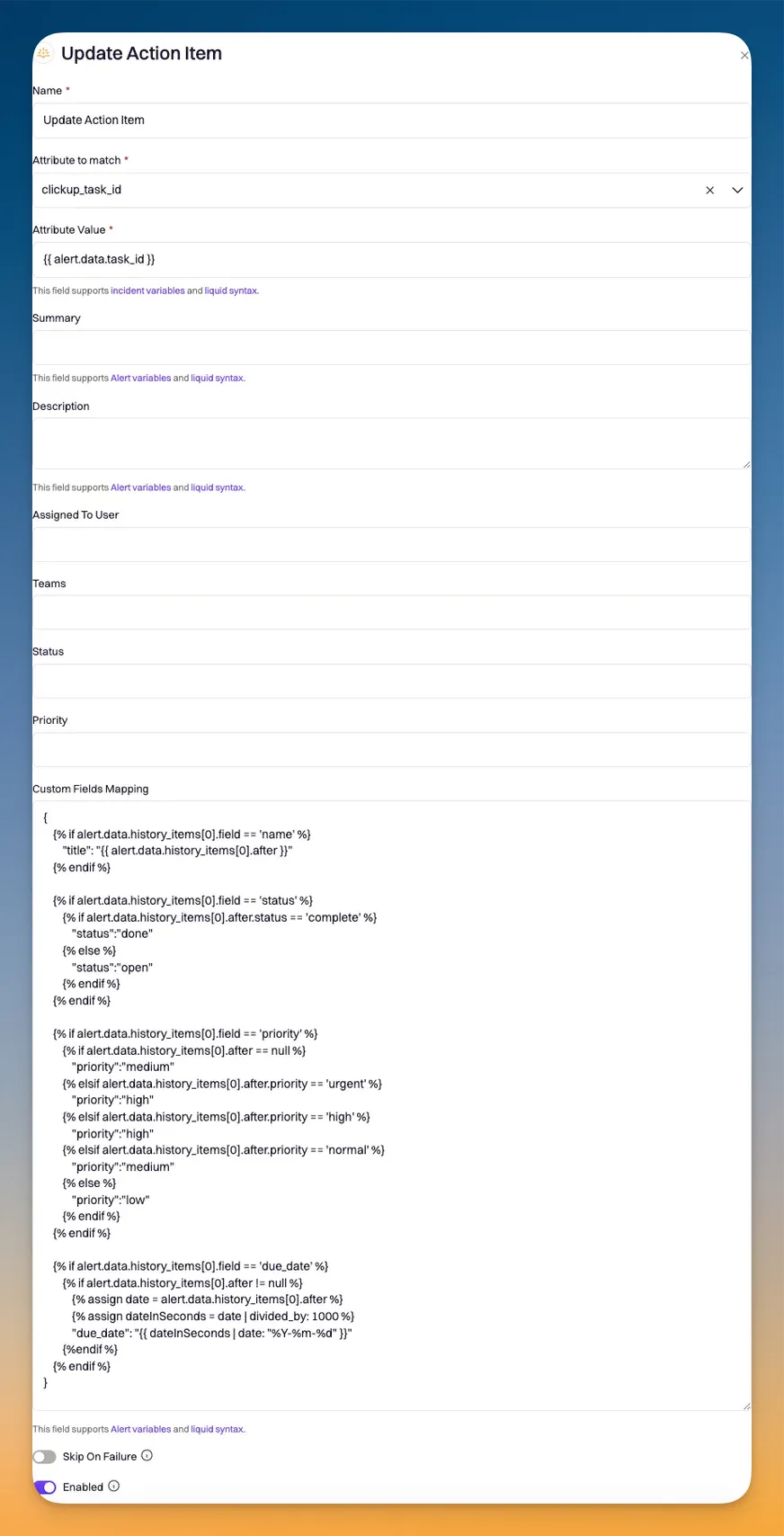

### Data Mapping Syntax

```JSON
{
  {% if alert.data.history_items[0].field == 'name' %}
    "title": "{{ alert.data.history_items[0].after }}"
  {% endif %}
  {% if alert.data.history_items[0].field == 'status' %}
    {% if alert.data.history_items[0].after.status == 'complete' %}
      "status": "done"
    {% else %}
      "status": "open"
    {% endif %}
  {% endif %}
  {% if alert.data.history_items[0].field == 'priority' %}
    {% if alert.data.history_items[0].after == null %}
      "priority": "medium"
    {% elsif alert.data.history_items[0].after.priority == 'urgent' %}
      "priority": "high"
    {% elsif alert.data.history_items[0].after.priority == 'high' %}
      "priority": "high"
    {% elsif alert.data.history_items[0].after.priority == 'normal' %}
      "priority": "medium"
    {% else %}
      "priority": "low"
    {% endif %}
  {% endif %}
  {% if alert.data.history_items[0].field == 'due_date' %}
    {% if alert.data.history_items[0].after != null %}
      {% assign date = alert.data.history_items[0].after %}
      {% assign dateInSeconds = date | divided_by: 1000 %}
      "due_date": "{{ dateInSeconds | date: "%Y-%m-%d" }}"
    {% endif %}
  {% endif %}
}
```
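The template reads only the first entry of ClickUp's `history_items` array and translates it into a Rootly action item update. The Python sketch below restates the same branches so they are easier to follow. The sample events and the `map_clickup_change` helper are hypothetical, and the sketch formats the due date in UTC, whereas Liquid's `date` filter uses the rendering server's time zone.

```python
from datetime import datetime, timezone

# ClickUp priority -> Rootly action item priority, as in the template above.
PRIORITY_MAP = {"urgent": "high", "high": "high", "normal": "medium"}


def map_clickup_change(history_item: dict) -> dict:
    """Translate one ClickUp history item into Rootly action item fields."""
    field = history_item.get("field")
    after = history_item.get("after")
    if field == "name":
        return {"title": after}
    if field == "status":
        return {"status": "done" if after and after.get("status") == "complete" else "open"}
    if field == "priority":
        if after is None:
            return {"priority": "medium"}
        # Any priority not listed in PRIORITY_MAP falls through to "low".
        return {"priority": PRIORITY_MAP.get(after.get("priority"), "low")}
    if field == "due_date" and after is not None:
        # ClickUp sends milliseconds since the epoch; integer-divide by 1000 for seconds.
        seconds = int(after) // 1000
        return {"due_date": datetime.fromtimestamp(seconds, tz=timezone.utc).strftime("%Y-%m-%d")}
    return {}


print(map_clickup_change({"field": "priority", "after": {"priority": "normal"}}))  # {'priority': 'medium'}
```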

# Confluence

Source: https://docs.rootly.com/integrations/confluence/confluence

## Overview

Rootly's Confluence integration helps streamline the process of completing incident retrospectives. By leveraging pre-built templates and workflows, teams can automate retrospective creation, saving hours of manual work per incident.

Some key features of this integration include:

1. **Customizable templates**

* Rootly enables teams to pre-define retrospective templates in either Rootly or Confluence. If you're looking for a semi-custom retrospective that follows industry best practices, you can simply define the retrospective body in Rootly and we will take care of the rest. If you want a fully customized retrospective, you can build your template in Confluence and we will generate the retrospective accordingly.

2. **Liquid variable support**

* Liquid variables can be referenced in templates created in either Rootly or Confluence.

3. **Timeline visualization**

* Incident timelines are automatically generated for templates created in Rootly.

* [Custom Liquid syntax](/liquid/incident-variables) can be used to generate timelines for templates created in Confluence.

4. **Action item tracking**

* Follow-ups are automatically recorded when Rootly-hosted templates are used.

* [Custom Liquid syntax](/liquid/incident-variables "Custom Liquid syntax") can be used to list follow-ups in templates hosted on Confluence.

## Installation

Please see the [**Installation** page](/integrations/confluence/installation "Installation") to get started with your Confluence integration.

## Workflows

Rootly relies on workflows to automate interactions with Confluence. The [**Workflows** page](/integrations/confluence/workflows "Workflows") will walk you through how to set up commonly used workflows involving Confluence.

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or start a chat by navigating to **Help > Chat with Us**.

# Installation

Source: https://docs.rootly.com/integrations/confluence/installation

## Installing Confluence on Rootly


You'll be prompted to grant Rootly permission to integrate with your Confluence account. Once confirmed, the installation is considered complete!

## Setting Up Retro Templates in Confluence

Once logged into Confluence, select the ***Space*** in which you want to create the retrospective template.

Select ***Space Settings***.

Select ***Templates*** under **Look and Feel**.

Click on `Create New Template`.

Now you can build out your custom template! Liquid syntax is supported.

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or start a chat by navigating to **Help > Chat with Us**.

## Uninstall

You can **uninstall** this integration in the integrations panel by clicking **Configure > Delete**.

# Workflows

Source: https://docs.rootly.com/integrations/confluence/workflows

## Overview

Our Confluence Cloud integration leverages workflows to automatically generate retrospective pages in Confluence. If you are unfamiliar with how Workflows function, please visit our [Workflows](/workflows "Workflows") documentation first.

## Available Workflow Actions

### Create a Confluence Page

This action creates an incident retrospective as a Confluence page.

#### Name

This field is automatically set for you. You can rename this field to whatever best describes your action. The value in this field does not affect how the workflow action behaves.

#### Space Key

This field specifies the Confluence `Space Key` of the space in which the retrospective will be created.

To find the key for your Confluence Space, you'll need to go to **Space Settings > Space Details** in your Confluence instance.

#### Ancestor

This field specifies the `Page Key` (page ID) of the Confluence page under which the retrospective will be created. To learn how to obtain a `Page Key`, see [How to get Confluence page ID](https://confluence.atlassian.com/confkb/how-to-get-confluence-page-id-648380445.html "How to get Confluence page ID").

#### Title

This field allows you to define the title of the Confluence page. You can use `{{ incident.title }}` to match the title of your incident. This field supports Liquid syntax.
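
To illustrate how the Space Key, Ancestor, and Title fields relate to Confluence itself, the sketch below builds the JSON body that Confluence Cloud's v1 REST API (`POST /wiki/rest/api/content`) accepts when creating a page. It is an illustration only and not necessarily how Rootly creates the page; the helper name `confluence_page_body` and the sample values are hypothetical.

```python
import json
from typing import Optional


def confluence_page_body(
    space_key: str, title: str, html: str, ancestor_id: Optional[str] = None
) -> dict:
    """Build a Confluence v1 content-creation body (hypothetical helper)."""
    body = {
        "type": "page",
        "title": title,  # corresponds to the rendered Title field
        "space": {"key": space_key},  # corresponds to the Space Key field
        "body": {"storage": {"value": html, "representation": "storage"}},
    }
    if ancestor_id:
        # Corresponds to the Ancestor field: the page ID of the parent page.
        body["ancestors"] = [{"id": ancestor_id}]
    return body


if __name__ == "__main__":
    page = confluence_page_body(
        "ENG", "Checkout outage retrospective", "<p>Leadup</p>", "123456"
    )
    print(json.dumps(page, indent=2))
```

Leaving `ancestor_id` empty omits `ancestors`, in which case Confluence places the page at the top level of the space.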

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or start a chat by navigating to **Help > Chat with Us**.

# Cortex

Source: https://docs.rootly.com/integrations/cortex

Cortex is an Internal Developer Portal.

The Rootly + Cortex integration allows you to manage and organize incidents with efficiency and ease.

The unique combination of Cortex's **in-depth service insights** and Rootly's **automated incident management** eliminates the friction of context-switching and ensures your teams have the right information at the right time.

### Features and Benefits

**Trigger an incident directly from Cortex** - Identify service issues and escalate them to Rootly without switching tools, keeping response times low.

**Automated Incident Routing** - Ensure incident alerts immediately reach the right team via Cortex's automated service ownership data, eliminating delays and guesswork.

**View incident data on entity pages in Cortex** - View real-time service ownership, dependencies, and operational insights from Cortex directly within Rootly's incident workflow.

**Create Scorecards** - Create Scorecard rules and write CQL queries based on Rootly incidents.

**Comprehensive Post-Incident Analysis** - Leverage Rootly's automated retrospectives alongside Cortex's full historical service data to enhance post-incident learning and future preparedness.

**Minimize Context-Switching** - Reduce tool-hopping and keep your teams focused by consolidating incident management into one streamlined workflow.

**Unique Incident Catalog Support** - Unlike Cortex, Rootly provides built-in support for incidents within the catalog list, making it the only integration that ensures a unified view of active incidents alongside service ownership and operational data.

### **Requirements**

* Cortex admin

* Rootly admin or owner

### **Installation**

[Cortex integration setup](https://docs.cortex.io/docs/reference/integrations/rootly)

### **Support**

The Cortex team built this integration and actively manages it. For help, email [help@cortex.io](mailto:help@cortex.io).

# Alerts

Source: https://docs.rootly.com/integrations/datadog/alerts

## Overview

Datadog can be configured to send events to Rootly as alerts. Alerts received in Rootly can then be routed to a Slack channel or used to initiate incidents.

## Configure Webhook in Datadog

You'll be asked to configure a webhook. Select `+ New` in the Webhooks section (lower-left corner of the modal).

Configure the webhook to point to the following URL:

```txt URL
https://webhooks.rootly.com/webhooks/incoming/datadog_webhooks
```

You'll be prompted to enter the following details for the webhook.

### Name

Give your webhook a representative name.

### URL

The webhook URL can be obtained from your Datadog integration page in Rootly (**Integrations** > **Datadog** > **Configure**).

### Payload

#### General Alert

Copy the following code and paste it into the `Payload` field. This will result in a regular (non-paging) alert.

```json
{
  "id": "$ID",
  "body": "$EVENT_MSG",
  "last_updated": "$LAST_UPDATED",
  "event_type": "$EVENT_TYPE",
  "title": "$EVENT_TITLE",
  "alert_id": "$ALERT_ID",
  "alert_metric": "$ALERT_METRIC",
  "alert_priority": "$ALERT_PRIORITY",
  "alert_query": "$ALERT_QUERY",
  "alert_scope": "$ALERT_SCOPE",
  "alert_status": "$ALERT_STATUS",
  "alert_title": "$ALERT_TITLE",
  "alert_transition": "$ALERT_TRANSITION",
  "alert_type": "$ALERT_TYPE",
  "date": "$DATE",
  "org": {"id": "$ORG_ID", "name": "$ORG_NAME"}
}
```
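
Datadog fills in the `$VARIABLES` in this payload before sending it. To preview roughly what Rootly receives, here is a minimal Python sketch that substitutes sample values into a trimmed copy of the template with `string.Template` (which happens to use the same `$NAME` syntax) and parses the result as JSON. The helper `render_payload` and the sample values are hypothetical, and the JSON-escaping of values is this sketch's own choice, not a statement about how Datadog escapes its variables.

```python
import json
from string import Template

# A trimmed copy of the General Alert payload (hypothetical subset of fields).
PAYLOAD_TEMPLATE = """{
  "id": "$ID",
  "title": "$EVENT_TITLE",
  "alert_status": "$ALERT_STATUS",
  "alert_transition": "$ALERT_TRANSITION",
  "org": {"id": "$ORG_ID", "name": "$ORG_NAME"}
}"""


def render_payload(values: dict) -> dict:
    """Approximate Datadog's variable substitution, then parse the result as JSON."""
    # Escape each value as the inside of a JSON string, so quotes or newlines
    # in an alert message cannot break the surrounding JSON.
    escaped = {name: json.dumps(value)[1:-1] for name, value in values.items()}
    # safe_substitute leaves any variable without a sample value untouched.
    rendered = Template(PAYLOAD_TEMPLATE).safe_substitute(escaped)
    return json.loads(rendered)


if __name__ == "__main__":
    sample = {
        "ID": "1234567890",
        "EVENT_TITLE": 'High "p99" latency on checkout',
        "ALERT_STATUS": "Triggered",
        "ALERT_TRANSITION": "Triggered",
        "ORG_ID": "42",
        "ORG_NAME": "Example Org",
    }
    print(json.dumps(render_payload(sample), indent=2))
```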

#### Paging Rootly On-Call

Paging through Rootly On-Call also relies on webhook alerts. The main difference is the inclusion of a `notification_target` object under a `rootly` key.

```json
"rootly": {
  "notification_target": {
    "type": "Service",
    "id": "00acba53-b07e-455d-add6-73263209a610"
  }
}
```

* `type` - this defines the Rootly resource type that will be used for paging.

  * Available values: `User` | `Group` (Team) | `EscalationPolicy` | `Service`

* `id` - this specifies the exact resource that will be targeted for the page.

  * The `id` of the resource can be found when editing each resource.
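
As a sketch of how a paging payload relates to a general one, the hypothetical helper below adds a `rootly.notification_target` block to an existing payload and rejects `type` values outside the four listed above. The check is a client-side convenience for illustration, not Rootly's own validation, and the helper leaves all other fields unchanged.

```python
import json

# The `type` values listed above for notification targets.
VALID_TARGET_TYPES = {"User", "Group", "EscalationPolicy", "Service"}


def with_notification_target(payload: dict, target_type: str, target_id: str) -> dict:
    """Return a copy of `payload` with a rootly.notification_target block (hypothetical helper)."""
    if target_type not in VALID_TARGET_TYPES:
        raise ValueError(f"unsupported notification target type: {target_type!r}")
    if not target_id:
        raise ValueError("target id must not be empty")
    # Copy so the caller's general-alert payload is left unchanged.
    paged = dict(payload)
    paged["rootly"] = {"notification_target": {"type": target_type, "id": target_id}}
    return paged


if __name__ == "__main__":
    general = {"id": "$ID", "title": "$EVENT_TITLE"}
    paging = with_notification_target(
        general, "Service", "00acba53-b07e-455d-add6-73263209a610"
    )
    print(json.dumps(paging, indent=2))
```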

Copy the following code and paste it into the `Payload` field. This will result in both the alert appearing in Rootly and the targeted resource being paged.

```json
{
  "id": "$ID",
  "body": "$EVENT_MSG",
  "last_updated": "$LAST_UPDATED",
  "event_type": "composite_monitor",
  "title": "Datadog webhook alert",
  "alert_id": "$ALERT_ID",
  "alert_metric": "$ALERT_METRIC",
  "alert_priority": "$ALERT_PRIORITY",
  "alert_query": "$ALERT_QUERY",
  "alert_scope": "$ALERT_SCOPE",
  "alert_status": "$ALERT_STATUS",
  "alert_title": "$ALERT_TITLE",
  "alert_transition": "$ALERT_TRANSITION",
  "alert_type": "$ALERT_TYPE",
  "date": "$DATE",
  "org": {"id": "$ORG_ID", "name": "$ORG_NAME"},
  "rootly": {
    "notification_target": {
      "type": "Service",
      "id": "00acba53-b07e-455d-add6-73263209a610"
    }
  }
}
```

### Custom Header

Check the `Custom Header` checkbox and paste the following code into the text area.

```json
{"secret":"a04d2feb4286150731a718acba564198605675ec191ef9ae7956c6e15af54edf"}
```

Replace the `secret` value with the one found in your Datadog integration in Rootly (**Integrations** > **Datadog** > **Configure**).
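
For a quick sanity check outside Datadog, the sketch below builds (but does not send) a POST request shaped like the one Datadog makes: the JSON payload as the body and the Custom Header key `secret` sent as an HTTP header. It assumes the Custom Header JSON maps each key to a header name and that Rootly accepts a request like this from outside Datadog; `build_alert_request` is a hypothetical helper, and you should use the URL shown on your Rootly integration page if it differs.

```python
import json
import urllib.request

# The Datadog webhook URL given on this page; use the one from
# Integrations > Datadog > Configure in Rootly if it differs.
WEBHOOK_URL = "https://webhooks.rootly.com/webhooks/incoming/datadog_webhooks"


def build_alert_request(secret: str, payload: dict) -> urllib.request.Request:
    """Build, but do not send, a POST shaped like Datadog's webhook call (hypothetical helper)."""
    body = json.dumps(payload).encode("utf-8")
    request = urllib.request.Request(WEBHOOK_URL, data=body, method="POST")
    request.add_header("Content-Type", "application/json")
    # Assumption: the Custom Header {"secret": "..."} becomes an HTTP header named "secret".
    request.add_header("secret", secret)
    return request


if __name__ == "__main__":
    req = build_alert_request("<secret-from-rootly>", {"id": "test-1", "title": "Test alert"})
    print(req.get_method(), req.full_url)
    # Actually sending it would create an alert in Rootly:
    # urllib.request.urlopen(req)
```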

Once completed, go ahead and select `Save` to create your webhook.

## Attach Webhook to Monitor

Once your webhook is created, you need to attach it to a Datadog monitor. A monitor contains the firing logic that determines when alerts will be sent out.

Navigate to **Monitor** > **New Monitor** > **Event** to create a new monitor.

Now, configure your monitor with your desired firing logic. Most importantly, **select your webhook** in the **Notify your team** section.

Once ready, you can select `Test Notifications` to test out your monitor and webhook. You should see a test alert appear from Datadog on your [Rootly Alerts page](https://rootly.com/account/alerts "Rootly Alerts page").

Finally, **save your monitor**.

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or start a chat by navigating to **Help > Chat with Us**.

# Datadog

Source: https://docs.rootly.com/integrations/datadog/datadog

Datadog is a SaaS-based monitoring tool for engineering teams.

Integrating with Datadog allows you to:

* **Create Datadog notebooks** through workflows.

* **Retrieve Datadog graph snapshots** through workflows.

* **Retrieve Datadog dashboards** through workflows.

* **Receive Datadog alerts** to automatically create incidents.

### **Requirements**

* Datadog Admin role

* Rootly Owner or Admin

### Installation

Please see the [Installation page](/integrations/datadog/installation) to get started with your Datadog integration.

### Alerts

Rootly can ingest alerts from Datadog webhooks. The [**Alerts page**](/integrations/datadog/alerts) will walk you through how to set up Datadog to send alerts to Rootly and commonly used workflows involving Datadog alerts.

### Support

The Rootly team manages this integration. For help, use the slash command **/rootly help** in Slack or email [support@rootly.com](mailto:support@rootly.com).

# Installation

Source: https://docs.rootly.com/integrations/datadog/installation

## Installing Datadog on Rootly

You'll be prompted to grant Rootly permission to integrate with your Dropbox Paper account. Once confirmed, the installation is considered complete!

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or start a chat by navigating to **Help > Chat with Us**.

## Uninstall

You can **uninstall** this integration in the integrations panel by clicking **Configure > Delete**.

# Workflows

Source: https://docs.rootly.com/integrations/dropbox-paper/workflows

## Overview

Our Dropbox Paper integration leverages workflows to automatically generate retrospective documents in Dropbox Paper. If you are unfamiliar with how Workflows function, please visit our [Workflows](/workflows "Workflows") documentation first.

## Available Workflow Actions

### Create a Dropbox Paper

This action creates a retrospective document in a Dropbox Paper folder.

#### Name

This field is automatically set for you. You can rename this field to whatever best describes your action. The value in this field does not affect how the workflow action behaves.

#### Namespace

This field is **only available if you integrated with Dropbox Paper Business**. It lets you select the team folder from which the `Parent Folder` will be chosen.

#### Parent Folder

This field defines the folder in which the Paper document is created. The folders shown in the dropdown depend on whether you integrated with Dropbox Paper or Dropbox Paper Business.

* If you integrated with **Dropbox Paper**, then the list of folders will be restricted to your personal folders.

* If you integrated with **Dropbox Paper Business**, then the list of folders will be dependent on the folder selected in the `Namespace` field.

#### Title

This field defines the title of the Paper document. It defaults to your incident's title (`{{ incident.title }}`). This field supports Liquid syntax.

## Create an incident

Now you should have a generated **email alias** tied to your team.

Let's send our email!
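
If you want to script this step, the sketch below composes such an email with Python's standard `email` package; the subject carries a severity tag of the kind covered under "Specify a severity". The helper `build_incident_email` and the alias and sender addresses are hypothetical placeholders, and actually sending the message requires an SMTP server you control.

```python
from email.message import EmailMessage


def build_incident_email(alias: str, sender: str, subject: str, details: str) -> EmailMessage:
    """Compose an email to a team's Rootly email alias (hypothetical helper)."""
    message = EmailMessage()
    message["To"] = alias
    message["From"] = sender
    # A tag such as [SEV1] in the subject can be used to set the severity.
    message["Subject"] = subject
    message.set_content(details)
    return message


if __name__ == "__main__":
    msg = build_incident_email(
        alias="team-alias@example.invalid",  # replace with your generated alias
        sender="oncall@example.com",
        subject="[SEV1] Shopping cart is showing empty items",
        details="Customers report empty carts after adding items.",
    )
    print(msg)
    # To send it, use an SMTP server you control, for example:
    # import smtplib
    # with smtplib.SMTP("smtp.example.com") as smtp:
    #     smtp.send_message(msg)
```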

## Specify a severity

Rootly will **automatically detect** the incident **severity** in the email **subject** and map it accordingly:

For example:

* *\[SEV0] Shopping cart is showing empty items* **will be mapped** to severity **SEV0** (if it exists in your configuration).

* *\[SEV1] Shopping cart is showing empty items* **will be mapped** to severity **SEV1** (if it exists in your configuration).

* *Shopping cart is showing empty items \[SEV0]* **will be mapped** to severity **SEV0** (if it exists in your configuration).

* *Shopping cart is showing empty items, this is a sev1* **will be mapped** to severity **SEV1** (if it exists in your configuration).

* *Shopping cart is showing empty items* **won't be mapped** to any severity.
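
The examples above suggest a simple rule: look for `SEV` followed by a number anywhere in the subject, ignoring case, and map it only if that severity is configured. The sketch below approximates that rule with a regular expression. It is not Rootly's actual parser; it assumes severities are named `SEV` plus a number, and `detect_severity` is a hypothetical name.

```python
import re
from typing import Optional, Set

# "sev" followed by digits as a standalone token, e.g. "[SEV0]" or "sev1".
SEVERITY_PATTERN = re.compile(r"\bsev(\d+)\b", re.IGNORECASE)


def detect_severity(subject: str, configured: Set[str]) -> Optional[str]:
    """Return the severity named in an email subject if it is configured, else None."""
    match = SEVERITY_PATTERN.search(subject)
    if match is None:
        return None
    severity = f"SEV{match.group(1)}"
    # Only map to severities that exist in your configuration.
    return severity if severity in configured else None


if __name__ == "__main__":
    configured = {"SEV0", "SEV1", "SEV2"}
    for subject in [
        "[SEV0] Shopping cart is showing empty items",
        "Shopping cart is showing empty items, this is a sev1",
        "Shopping cart is showing empty items",
    ]:
        print(subject, "->", detect_severity(subject, configured))
```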

## Add emails to the timeline

You can reply to an incident email, and Rootly will add your reply to the incident timeline.

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or use the **lower right chat widget** to get connected with an engineer.

# Fivetran

Source: https://docs.rootly.com/integrations/fivetran

Fivetran is a no-code data movement platform that allows you to automatically transform data and then send it to your data warehouse.

Rootly provides plenty of built-in, customizable metrics right in the web platform, but if you want to dive even deeper into the data, the Fivetran integration will pull your Rootly data right into your centralized data location.

Fivetran imports these fields:

* Audit

* Cause

* Form fields

* Incidents

* Roles

* Workflows

### Requirements

* Fivetran user

* Rootly admin or owner

### Installation

[Fivetran's Rootly (Lite) integration guide](https://fivetran.com/docs/connectors/applications/rootly/setup-guide)

### Support

This integration was built by and is actively managed by the Fivetran team. If you have an issue with the data received via the integration, please contact [Fivetran](https://support.fivetran.com/hc/en-us/requests/new) for help.

# Installation

Source: https://docs.rootly.com/integrations/freshdesk/installation

## Why

The **Freshdesk** integration allows you to:

* Optionally create a ticket or task in **Freshdesk** whenever an incident is created in **Rootly**.

* Optionally create a ticket or task in **Freshdesk** whenever an action item is created in **Rootly**.

## Installation

You can set up this integration from the integrations page while logged in as an **admin user**.

Specify your **API key** and the **domain** in which you want to create tasks.

## Uninstall

You can **uninstall** this integration in the integrations panel by clicking **Configure > Delete**.

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or use the **lower right chat widget** to get connected with an engineer.

# Workflows

Source: https://docs.rootly.com/integrations/freshdesk/workflows

You'll be **prompted to name your source**.

Follow the instructions present on the Generic webhook creation page to complete setup.

### Note on setting the team, escalation policy or service

There are several options for specifying how an alert can be associated with a service, escalation policy or team.

#### Using the webhook URL

This is the simplest option, allowing a single service, team, or escalation policy to be associated with an alert.

1. Following the default instructions in 'Step 1', set the `type` and `id` in the webhook URL. This specifies, per webhook, the resource type (`service`, `group` (team), or `escalationPolicy`) and the ID of the service, team, or escalation policy.

2.

3. Set the required field 'notification name' in Step 2.

4. Click 'save', then click 'I finished this step'.

**Note**: *if `type` and `id` are set in the URL in Step 1, indicating the `type` and `id` can be skipped in Step 2.*

#### Using the response payload

Alternatively, the `type` and `id` can be set after the call is made, by parsing the webhook payload sent by your observability provider. *This is a more flexible option that allows multiple types and IDs to be set with a single webhook, but it requires modifying the observability provider's payload*.

1. Follow the Step 1 instructions, but do not include the type and id in the webhook URL ([https://webhooks.rootly.com/webhooks/incoming/generic\_webhooks/notify/](https://webhooks.rootly.com/webhooks/incoming/generic_webhooks/notify/)).

2. In your observability provider, update the payload to include the type (`service`, `group` (team), or `escalationPolicy`) and the id (found on each respective object's index page in Rootly).

1. Example: `{... type: service, type:
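
The example above is cut off, so the exact placement of these keys is not shown here. Assuming they sit at the top level of the payload, a minimal sketch of step 2 might look like the following. `add_routing` and the sample values are hypothetical; the helper refuses to overwrite an existing `type` or `id` key, since many providers already use `id` for their own alert ID, in which case you should confirm where Rootly expects these values.

```python
import json

BASE_URL = "https://webhooks.rootly.com/webhooks/incoming/generic_webhooks/notify/"

# The `type` values listed in step 2.
TARGET_TYPES = {"service", "group", "escalationPolicy"}


def add_routing(payload: dict, target_type: str, target_id: str) -> dict:
    """Return a copy of an alert payload with top-level `type` and `id` keys (hypothetical helper)."""
    if target_type not in TARGET_TYPES:
        raise ValueError(f"unknown target type: {target_type!r}")
    clash = payload.keys() & {"type", "id"}
    if clash:
        # Refuse to silently overwrite fields the provider already uses.
        raise ValueError(f"payload already defines {sorted(clash)}")
    return {**payload, "type": target_type, "id": target_id}


if __name__ == "__main__":
    alert = {"title": "Checkout error rate above 5%", "status": "firing"}
    body = add_routing(alert, "service", "<service-id-from-rootly>")
    print("POST", BASE_URL)
    print(json.dumps(body, indent=2))
```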

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or start a chat by navigating to **Help > Chat with Us**.

# Functionalities

Source: https://docs.rootly.com/integrations/github/functionalities

## Attaching PRs to Incidents

Rootly will automatically detect a PR link and attach it to the incident when an engineer sends the URL in Slack. As the PR is approved and merged, Rootly will automatically communicate the status update in Slack and add an event to the incident's timeline.
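
How Rootly recognizes PR links is not documented here. As a rough illustration only, a detector for the standard `https://github.com/<owner>/<repo>/pull/<number>` URL shape could look like the following Python sketch; the pattern and the `find_pr_links` helper are hypothetical.

```python
import re

# Matches https://github.com/<owner>/<repo>/pull/<number>.
# Illustrative assumption only, not Rootly's actual detector.
PR_URL = re.compile(
    r"https://github\.com/"
    r"(?P<owner>[A-Za-z0-9-]+)/"
    r"(?P<repo>[A-Za-z0-9._-]+)/"
    r"pull/(?P<number>\d+)"
)


def find_pr_links(message: str) -> list[tuple[str, str, int]]:
    """Return (owner, repo, number) for every PR link in a chat message."""
    return [
        (m["owner"], m["repo"], int(m["number"]))
        for m in PR_URL.finditer(message)
    ]


if __name__ == "__main__":
    msg = "Fix is in https://github.com/acme/api/pull/42, please review"
    print(find_pr_links(msg))  # [('acme', 'api', 42)]
```

The pattern also matches links wrapped in Slack's `<...>` markup or pointing at a PR sub-page such as `/files`, since the match stops after the PR number.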

## Fetch recent commits

A new task for fetching recent GitHub commits is now available in your **Genius workflows**.
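
The workflow task's internals are not described on this page. For reference, the public GitHub REST endpoint that lists a repository's commits is `GET /repos/{owner}/{repo}/commits`, which accepts an ISO 8601 `since` filter and a `per_page` limit. The sketch below only builds such a request; the helper name, the 24-hour default window, and the token are assumptions.

```python
import urllib.parse
import urllib.request
from datetime import datetime, timedelta, timezone

API_ROOT = "https://api.github.com"


def recent_commits_request(owner: str, repo: str, token: str,
                           hours: int = 24, per_page: int = 30) -> urllib.request.Request:
    """Build a GET request for commits made in the last `hours` hours."""
    since = datetime.now(timezone.utc) - timedelta(hours=hours)
    query = urllib.parse.urlencode({
        # ISO 8601 timestamp; only commits after this time are returned.
        "since": since.strftime("%Y-%m-%dT%H:%M:%SZ"),
        "per_page": per_page,
    })
    path = f"/repos/{urllib.parse.quote(owner)}/{urllib.parse.quote(repo)}/commits"
    return urllib.request.Request(
        f"{API_ROOT}{path}?{query}",
        headers={
            "Accept": "application/vnd.github+json",
            "Authorization": f"Bearer {token}",
            "X-GitHub-Api-Version": "2022-11-28",
        },
    )
```

To send it, pass the request to `urllib.request.urlopen` and decode the JSON array of commits from the response body.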

## Pulses

Rootly will automatically add the following GitHub events as pulses:

* Pushes to any repository

* Merged pull requests

* More to come...
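
Rootly's event mapping is not published here; the sketch below is a hypothetical illustration of how the two listed GitHub webhook deliveries can be told apart. A push arrives with the `X-GitHub-Event: push` header, while a merged pull request arrives as a `pull_request` event whose `action` is `closed` and whose `pull_request.merged` flag is `true`. The output keys borrow field names from the `pulse.*` payload (`source`, `summary`, `labels`, `refs`), but the values are illustrative.

```python
from typing import Optional


def to_pulse(event: str, payload: dict) -> Optional[dict]:
    """Map a GitHub webhook delivery to a pulse-like dict, or None to skip it.

    `event` is the value of the X-GitHub-Event header.
    """
    repo = payload["repository"]["full_name"]
    if event == "push":
        branch = payload["ref"].removeprefix("refs/heads/")
        return {
            "source": "github",
            "summary": f"Push to {repo}@{branch}",
            "labels": [{"key": "repository", "value": repo}],
            "refs": [{"key": "sha", "value": payload["after"]}],
        }
    if event == "pull_request":
        pr = payload["pull_request"]
        # Closing a PR without merging also sends action "closed",
        # so the `merged` flag is what identifies a merge.
        if payload["action"] == "closed" and pr.get("merged"):
            return {
                "source": "github",
                "summary": f"Merged PR #{pr['number']}: {pr['title']}",
                "labels": [{"key": "repository", "value": repo}],
                "refs": [{"key": "pull_request", "value": pr["html_url"]}],
            }
    # Every other event type is ignored in this sketch.
    return None
```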

## About GitHub secret scanning

GitHub secret scanning protects users by searching repositories for known types of secrets. By identifying and flagging these secrets, these scans help prevent data leaks and fraud.

GitHub partnered with [Rootly](https://rootly.com/ "Rootly") to scan for our tokens and help secure our mutual users on public repositories. Rootly tokens allow users to authenticate against our API, for example to create incidents programmatically. GitHub forwards access tokens found in public repositories to Rootly, which notifies workspace owners and lets them revoke the tokens within a few seconds.

GitHub Advanced Security customers can also scan for Rootly tokens and block them from entering their private and public repositories with [push protection](https://github.blog/changelog/2022-04-04-secret-scanning-prevents-secret-leaks-with-protection-on-push/ "push protection").

* [Learn more about secret scanning](https://docs.github.com/en/github/administering-a-repository/about-secret-scanning "Learn more about secret scanning")

* [Partner with GitHub on secret scanning](https://docs.github.com/en/developers/overview/secret-scanning/ "Partner with GitHub on secret scanning")

# GitHub

Source: https://docs.rootly.com/integrations/github/github

## Why

**GitHub** Integration allows you to:

* Fetch recent GitHub commits through workflows.

* Create and update GitHub issues for incidents and action items.

* Track code deployment events such as pull requests, approvals, and commits in Pulses.

* Enrich GitHub PR links you paste into your incident Slack channel and track the PRs' statuses.

## Permissions

The following permissions are required for this integration:

* **READ** access to checks, code, deployments, metadata, and pull requests.

* **READ** and **WRITE** access to issues.
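
If you want to confirm what an installation actually grants, GitHub's installation objects (for example, those returned by `GET /orgs/{org}/installations`) include a `permissions` map with `read` or `write` values. The sketch below checks such a map against the list above. It assumes the list maps onto GitHub's permission keys as shown, with "code" corresponding to GitHub's `contents` permission; that mapping is an assumption, not something this page states.

```python
# Assumed mapping of the permissions above onto GitHub App permission keys.
REQUIRED = {
    "checks": "read",
    "contents": "read",  # "code" in the list above (assumption)
    "deployments": "read",
    "metadata": "read",
    "pull_requests": "read",
    "issues": "write",
}
# "write" implies "read", so levels are compared numerically.
LEVELS = {"read": 1, "write": 2}


def missing_permissions(granted: dict[str, str]) -> dict[str, str]:
    """Return each required permission that `granted` lacks or grants too weakly."""
    return {
        name: level
        for name, level in REQUIRED.items()
        if LEVELS.get(granted.get(name, ""), 0) < LEVELS[level]
    }
```

An empty result means the installation's permissions cover everything listed above.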

## Installation

1️⃣ Click the `GitHub Marketplace` button.

2️⃣ Search for "*rootly*" and click the **Rootly** app.

Click `Add` to begin the installation process.

3️⃣ If you belong to multiple GitHub organizations, make sure you **select the correct organization**, then click `Install it for free`.

Double-check that you've **selected the correct organization**. Select the `Allow my billing information to be linked with this organization` checkbox and click `Save`.

When ready, click `Complete order and begin installation`.

4️⃣ Select the desired **scope of access** and click `Install`.

5️⃣ Log out of your GitHub account. You will re-establish the connection from Rootly in the next step.

6️⃣ Switch to your Rootly account to complete the installation. Navigate to the **Integrations** page in Rootly via this [link](https://rootly.com/account/integrations "link") and search for "*github*".

7️⃣ You'll be prompted to sign in to GitHub to establish a connection to the correct GitHub organization.

8️⃣ Click `Save` and you're all set!

## Uninstall

To fully uninstall the integration, remove GitHub from Rootly **and** uninstall the **rootlyhq** app from your GitHub account.

1️⃣ `Delete` the GitHub integration from Rootly by opening the GitHub integration modal on the **Integrations** page in Rootly.

2️⃣ `Uninstall` the **rootlyhq** app from your organization's **GitHub Apps** page.

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or start a chat by navigating to **Help > Chat with Us**.

# GitLab

Source: https://docs.rootly.com/integrations/gitlab

## Why

**GitLab** Integration allows you to:

* Fetch recent **GitLab** commits through **Genius** Workflows.

* Track GitLab events as pulses.

* Enrich GitLab PR links you paste into your incident Slack channel and track the PRs' statuses.

## Installation

You can set up this integration as a **logged-in admin user** on the integrations page:

Let's create an OAuth2 application:

Enter the following information:

* **redirect\_uri**: [https://rootly.com/auth/gitlab/callback](https://rootly.com/auth/gitlab/callback)

* **scopes**: `api` or `read_api`
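
Rootly runs the OAuth2 flow for you once the credentials are saved. To see how the values above fit together (for example, when GitLab reports a redirect URI mismatch), here is a Python sketch of the URL for GitLab's authorization-code request, `GET /oauth/authorize`. The `gitlab_base` parameter is an assumption added for illustration; this page does not say whether self-managed GitLab instances are supported.

```python
import secrets
import urllib.parse

REDIRECT_URI = "https://rootly.com/auth/gitlab/callback"


def authorize_url(application_id: str, scope: str = "read_api",
                  gitlab_base: str = "https://gitlab.com") -> tuple[str, str]:
    """Return (url, state) for GitLab's OAuth2 authorization-code flow.

    `state` is a random value the caller stores and compares on callback
    to reject forged redirects.
    """
    if scope not in {"api", "read_api"}:
        raise ValueError("scope must be 'api' or 'read_api'")
    state = secrets.token_urlsafe(16)
    query = urllib.parse.urlencode({
        "client_id": application_id,  # the Application ID GitLab shows
        "redirect_uri": REDIRECT_URI,  # must match the registered value exactly
        "response_type": "code",
        "state": state,
        "scope": scope,
    })
    return f"{gitlab_base.rstrip('/')}/oauth/authorize?{query}", state
```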

Copy **Application ID** and **secret** into Rootly:

And you are all set!

## Fetch recent commits

A new task for fetching recent GitLab commits is now available in your **Genius workflows**.
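
This page does not describe how the task queries GitLab. For reference, GitLab's REST API lists commits with `GET /api/v4/projects/:id/repository/commits`, filtered by `ref_name` and an ISO 8601 `since` timestamp. The sketch below builds such a request with a hypothetical helper. A `namespace/project` path must be URL-encoded when used as `:id`, and the OAuth2 access token is assumed to come from the application created above.

```python
import urllib.parse
import urllib.request
from datetime import datetime, timedelta, timezone


def gitlab_commits_request(project_path: str, access_token: str, ref: str = "main",
                           hours: int = 24,
                           gitlab_base: str = "https://gitlab.com") -> urllib.request.Request:
    """Build a GET request for commits on `ref` from the last `hours` hours."""
    # A project may be addressed by its URL-encoded "namespace/project" path,
    # so every slash must become %2F.
    project = urllib.parse.quote(project_path, safe="")
    since = datetime.now(timezone.utc) - timedelta(hours=hours)
    query = urllib.parse.urlencode({
        "ref_name": ref,
        "since": since.strftime("%Y-%m-%dT%H:%M:%SZ"),
    })
    url = f"{gitlab_base.rstrip('/')}/api/v4/projects/{project}/repository/commits?{query}"
    # OAuth2 access tokens are sent as Bearer tokens.
    return urllib.request.Request(url, headers={"Authorization": f"Bearer {access_token}"})
```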

## Pulses

Rootly will automatically add the following GitLab events as pulses:

* Pushes to any repository

* Merged pull requests

* More to come...
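
GitLab identifies each webhook delivery with an `object_kind` field in the JSON body (`push` for pushes, `merge_request` for merge requests), and a merge sets `object_attributes.action` to `merge`. The hypothetical sketch below uses those fields to decide which deliveries would become pulses; it is not Rootly's implementation, and the output keys only borrow names from the `pulse.*` payload.

```python
from typing import Optional


def gitlab_event_to_pulse(body: dict) -> Optional[dict]:
    """Return a pulse-like summary for pushes and merged MRs, else None."""
    project = body["project"]["path_with_namespace"]
    kind = body.get("object_kind")
    if kind == "push":
        branch = body["ref"].removeprefix("refs/heads/")
        return {"source": "gitlab",
                "summary": f"Push to {project}@{branch}",
                "refs": [{"key": "sha", "value": body["after"]}]}
    if kind == "merge_request":
        mr = body["object_attributes"]
        # Other actions (open, update, close, ...) are not merges.
        if mr.get("action") == "merge":
            return {"source": "gitlab",
                    "summary": f"Merged !{mr['iid']}: {mr['title']}",
                    "refs": [{"key": "merge_request", "value": mr["url"]}]}
    return None
```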

## Uninstall

You can **uninstall** this integration in the integrations panel by clicking **Configure > Delete**

## Support

If you need help or more information about this integration, please contact [support@rootly.com](mailto:support@rootly.com) or use the **lower right chat widget** to get connected with an engineer.

# Installation

Source: https://docs.rootly.com/integrations/google-calendar/installation

## Installing Google Calendar on Rootly

## Support